Yesterday, I piloted part of my dissertation study with my very Fabulous Research Assistant (FRA) at Albion College. I tested my protocols for think-aloud training (putting together a space puzzle) and for the pre-test activity.

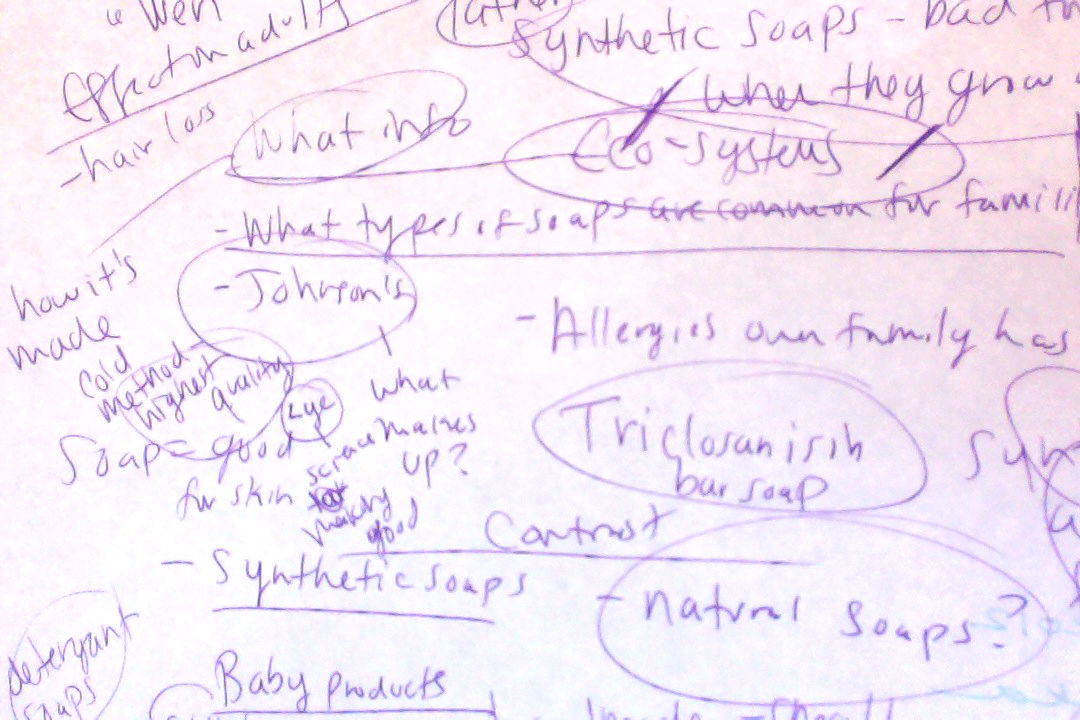

The pre-test prompt that FRA piloted asks students to read about the impact of common household chemicals, specifically those found in soaps, on child development. Students are encouraged to use any type of Internet text (words, pictures, video, graphs, figures, etc.) to learn about the topic. Then, students are asked to write an argument for parents of young children that might inform their choice to use or not use widely available synthetic soaps.

FRA did an incredible job. The skills and strategies she demonstrated yesterday allowed her to construct a rich and synthesized understanding of the topic. However, despite her rather expert negotiation of the task, I noticed that she did not use any multi-modal sources. No pictures. No video. No graphics of any sort.

As we debriefed the session, I asked her why.

What I learned is, I think, rather important to our field's broader understanding of synthesis processes with multi-modal Internet texts. She told me that her choice to depend exclusively on text-based information was grounded in several strategic choices. First, she said that because the task was ultimately to construct a written argument, she only used written sources for information. The parallelism between the source genre and the genre she would need to create was important to her. Second, she said that she skims text but that she can’t skim video. Even though she might have been able to find a video with good information in it, she chose not to search for video sources because she knew she would be able to glean more information, more quickly, from text.

Though this is not an exact quote, FRA said something like this: “You might watch a video for a minute and then want to skip ahead because it’s not giving you what you need…but then you might skip useful information if you fast-forward through a video…so you have to watch it all.”

In a timed situation (e.g., a 45-minute class period at school), it made no sense to FRA to use video. She did note that she would have used infographics or charts with statistics to help her construct meaning for this task, but it hadn’t actually occurred to her to do so, even though the instructions gave her those options. This finding helped me refine the wording of the prompt. Still, the odds that students will try to use information from images, video, or other visuo-graphic representations to construct meaning on a science topic might be pretty slim, particularly given the parameters of the task (which I’m sure are valid, ecologically speaking) and their predispositions or preexisting schemas for this type of task.

What does this mean?

Well, FRA’s thoughts have given me a lot to think about. Eighth-grade students who participated in my practicum research told me they didn’t use video because, as FRA also noted, it takes too much time. Several of those students also told me that they didn’t think to use video or even images because they’re not supposed to download media at school. Even though I hadn’t specified that they couldn’t use video or images in the study, the context of the research (school) suggested to them that such sources would be prohibited or blocked by the network filters.

So, I’m left with the observation that for this type of task, for many logistical reasons, the primacy of print prevails. And yet, I wonder if this isn’t also because children don’t learn to construct meaning from multiple semiotic systems as automatically as they learn to construct it from words. In FRA’s case, there was a very deliberate adherence to text genre: she felt it essential for the genre of the information source and the genre of the output to align. She went on to say that if the task were to create a PowerPoint, a poster, or something similar, she would have looked for images or videos, but not for a written argument. Why would these ideas about text source and text output have anchored her approach to this task so firmly?

What kinds of instruction have children received that would encourage them to seek out meaning from multiple media? Or conversely, what kinds of instruction have dismissed the potential of multi-modal texts for this type of task?

Also, I’m left to wonder whether, for research purposes, videos shouldn’t always come with a transcript and time markers that can be quickly skimmed by would-be viewers. If there were some way to strategically target the information in a video for the task purpose, I think more students might be inclined to use videos as an information source.

Have others made similar observations about the use of multi-modal text sources for this type of synthesis task online?

I’d love to hear your thoughts and expertise on the matter.